Zombie Statistics, Part 2: The Breadcrumbs of Memory and the Habitual Mythology of Bias

by Steven S. Vrooman

I've written before about our often sad research skills and our love of just citing things from the magic internets.

At this moment I am teaching a Professional Speaking class. 4 hours a day. 2 weeks. It's like speaking boot camp. Here's a story from the class that illustrates some of the flaws in how we think about gathering digital knowledge.

It starts with a crumb of knowledge and then we follow the trail. What could go wrong?

The Crumb

In a conversation about the need for transitions, previews and summaries, all the connective cues that help audiences follow as we speak, a student, a psychology major, suggested that we can only remember "seven, plus or minus two" things at a time. She did not cite the source of that, and other students nodded at hearing, once again, numbers that had become a kind of "received knowledge" about the world. There is a powerful effect from hearing, again, a number you've heard before. It begins to take on the weight of fact, even if we don't really know if it's true, what Charles Seife, with perhaps apologies to Stephen Colbert, calls "proofiness."

Of course, that kind of persuasion is not really durable. That sort of persuasive cue does not really engage the brain. It is what various two-stage models of persuasion call either "peripheral" or "heuristic" information. Think about the utility of that kind of thing for difficult persuasive tasks like. . .

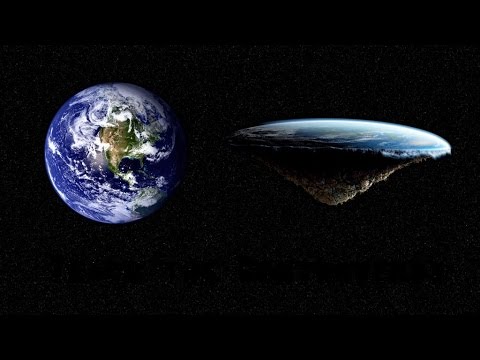

. . . convincing the audience that the Earth is actually flat. I might take zombie Internet stats for truth if you are telling me what I already believe, but in other cases, no way. You've got to have an answer when your audience starts to get angry like every public meeting in Parks and Recreation.

The Trail

One student clearly liked that idea and wanted to use it for her speech. Of course, depending on how you Google that, you end up with all sorts of vague things. This student, in her first speech draft, cited "human-memory.net." Okay. Dot-net sounds legit, yeah? She got docked some points on her grade.

Curious, I went to the site and first noted the "Seguin, TX Residents Are 'Rattled' By New Public Records Website" ad embedded in the top paragraph and the "Chakra balancing" ad at the bottom. More legit by the second! Looking further, there's not a citation on the page to any of the research that generates the various "facts" about memory. All the blue-underlined links are to other pages on their site.

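I suggested that this site was not credible enough and she was shocked when I told her to go to Wikipedia. She looked like: 😯

Yep. What we learn in high school is:

- Quotations are the only evidence that there is.

- Unless the quote is Wikipedia. THAT's not a thing.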

Of course, quotations are the LEAST effective kind of evidence for an argument, but it is easy to grade sophomore English essays built on quotes when grumpy faculty are coming in to fill out rubrics over the summer, so we might as well ruin the analytic skills of a generation of students . . .

Wikipedia is as good as the edits. Look around. Look at the MANY CITATIONS ON THE BOTTOMS OF PAGES. See if they are any good. For some things Wikipedia is great, like the persuasion theory sites I linked before, or the history of Disneyland's Pirates of the Caribbean ride, Cicero's De Oratore, the Berlin Defense in chess, RCA's Photophone or the inflationary universe model of cosmology, especially if you are using it to investigate those citations on the bottom.

The House Made of Candy

In this case, the Wikipedia page for "The Magical Number Seven, Plus or Minus Two" is worth more than any of the sites a Google search would give you in the first 3 and a half pages of the Google list (on the day I wrote this -- it may be better or worse on your day if you try it).

How can you know that? Well, the G. A. Miller study from 1956 that generated this number is cited right off the bat! And there are 15 more cited articles from the testing and development of that idea over the past 60 years, of which 13 are peer-reviewed.

You can easily find the pdf of the original article plus the arguments and problems with the theory.

Sometimes following the trail is easy, as long as you set aside your habitual mythology of bias.

And, in this case, you can get not only the peripheral/heuristic bump from giving us numbers, but also the citation credibility bump from citing a few impressive-sounding journal titles. Plus, Miller was at Harvard when he wrote it, which is at least better than average as an institution of higher learning, wouldn't you say?

Of course, I would suggest a better search to begin with, perhaps in a peer-reviewed psychology database, to generate a more nuanced analysis of this figure, but that would in fact be more work, so there's that. But good research does not always mean more work. It is just smarter, better work. More work is always possible, and more work, in the right places, usually does lead to better. But trying to do less work by hopping on the first site in the Google list does not usually end up saving time anyway.

The Witch

This is where I would give you the downside of this more credible and rigorous approach to simple Internet research.

Yeah, nope. None. No one will eat you. No chicken bones through the cage. You are doing it wrong and fairy tales contributed to the persecution of women through witchcraft panics. Really. Why do you think witches have big cooking pots and brooms? Gee .... I wonder who typically used those things in the 15th century .....
